In my post last week I talked about the importance of representative samples for making universal statements, including averages and percentages. But how big should your sample be? You don’t need to look at everything, but you probably need to look at more than one thing. How big a sample do you need in order to be reasonably sure of your estimates?

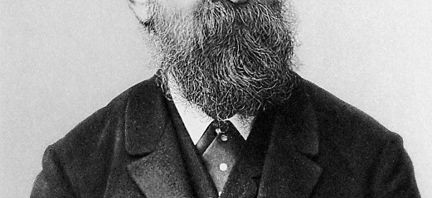

One of the pioneers in this area was a mysterious scholar known only to the public as Student. He took that name because he had been a student of the statistician Karl Pearson, and because he was generally a modest person. After his death, he was revealed to be William Sealy Gosset, Head Brewer for the Guinness Brewery. He had published his findings (PDF) under a pseudonym so that the competing breweries would not realize the relevance of his work to brewing.

Pearson had connected sampling to probability, because for every item sampled there is a chance that it is not a good example of the population as a whole. He used the probability integral transformation, which required relatively large samples. Pearson’s preferred application was biometrics, where it was relatively easy to collect samples and get a good estimate of the probability integral.

The Guinness brewery was experimenting with different varieties of barley, looking for ones that would yield the most grain for brewing. The cost of sampling barley added up over time, and the number of samples that Pearson used would have been too expensive. Student’s t-test saved his employer money by making it easy to tell whether they had the minimum sample size that they needed for good estimates.

Both Pearson’s and Student’s methods resulted in equations and tables that allowed people to estimate the probability that the mean of their sample is inaccurate. This can be expressed as a margin of error or as a confidence interval, or as the p-value of the mean. The p-value depends on the number of items in your sample, and how much they vary from each other. The bigger the sample and the smaller the variance, the smaller the p-value. The smaller the p-value, the more likely it is that your sample mean is close to the actual mean of the population you’re interested in. For Student, a small p-value meant that the company didn’t have to go out and test more barley crops.
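To make the link between sample size, variation and uncertainty concrete, here is a minimal sketch of a margin of error and confidence interval based on Student’s t distribution. It rests on several assumptions that are not in the discussion above: the barley yield numbers are made up, the function names are hypothetical, the 95% confidence level is chosen only for illustration, and the critical values are copied from a standard two-sided t table rather than computed.

```python
import math
import statistics

# Two-sided 95% critical values of Student's t distribution,
# keyed by degrees of freedom (sample size minus one).
# Copied from a standard t table; only small samples are covered.
T_CRIT_95 = {
    1: 12.706, 2: 4.303, 3: 3.182, 4: 2.776, 5: 2.571,
    6: 2.447, 7: 2.365, 8: 2.306, 9: 2.262, 10: 2.228,
}


def margin_of_error(sample):
    """Return the 95% margin of error for the mean of a small sample."""
    n = len(sample)
    df = n - 1
    if df not in T_CRIT_95:
        raise ValueError("this sketch only covers samples of 2 to 11 items")
    # Standard error of the mean: sample standard deviation / sqrt(n).
    standard_error = statistics.stdev(sample) / math.sqrt(n)
    return T_CRIT_95[df] * standard_error


def confidence_interval(sample):
    """Return (low, high) bounds of the 95% confidence interval for the mean."""
    mean = statistics.mean(sample)
    margin = margin_of_error(sample)
    return (mean - margin, mean + margin)


# Made-up yields from five barley plots (illustrative numbers only).
small_sample = [4.1, 3.8, 4.4, 4.0, 3.9]
# The same values observed twice: a bigger sample with similar spread.
large_sample = small_sample * 2

print(margin_of_error(small_sample))
print(margin_of_error(large_sample))
print(confidence_interval(small_sample))
```

With these invented numbers, the margin of error is roughly 0.29 for the five-plot sample and roughly 0.16 for the ten-plot sample: more items with the same spread give a tighter estimate of the mean.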

Before you gather your sample, you decide how much uncertainty you’re willing to tolerate: in other words, a maximum p-value, designated by α (alpha). When a sample’s p-value is lower than the α-value, the result is said to be significant. One popular α-value is 0.05, but the threshold is often decided collectively, and enforced by journal editors and thesis advisors who will not accept an article where the results don’t meet their standards for statistical significance.

The tests of significance determined by Pearson, Student, Ronald Fisher and others are hugely valuable. In science it is quite common to get false positives, where it looks like you’ve found interesting results but you just happened to sample some unusual items. Achieving statistical significance tells you that the interesting results are probably not just an accident of sampling. These tests protect the public from inaccurate data.

Like every valuable innovation, tests of statistical significance can be overused. I’ll talk about that in a future post.