I’ve always been uncomfortable around historical re-enactors at museums – people who walk around in period costume while visitors in modern clothes browse the exhibits. I’m sure most of them are nice people, and I get the idea that seeing people in period costume can help bring the past to life for visitors. I’m happy if they talk to me as presenters (“I’m dressed as so-and-so, who was enslaved…”). But I can’t have a conversation with them in character.

I understand performance; I did my PhD on theater. I can watch actors on screen delivering lines and learn from them, or be moved and entertained by their performances. I’ve performed on stage a bit. I’m very familiar with the suspension of disbelief that comes with performance.

I even understand that there’s a bit of performance in many interactions, from museum guides to teaching to customer service, even with friends and family. And often there’s suspension of disbelief involved.

So why do I get so uncomfortable talking to historical re-enactors? Because it’s not clear who I’m performing for. I don’t have a role, unless it’s time traveler from the twenty-first century. If there are other visitors to the museum, and I think for some reason they’d benefit from seeing the historical character interact with a time traveler, sure I’d consider it. But if it’s just me and the presenter, and maybe a friend or family member of mine? The presenter has seen these performances hundreds of times. How does that fit in their job description?

But this post is not about historical re-enactors; it’s about “AI” – chatbots, large language models. And just as I don’t want to talk to a historical re-enactor in character, I don’t want to pretend I’m having a conversation with a chatbot.

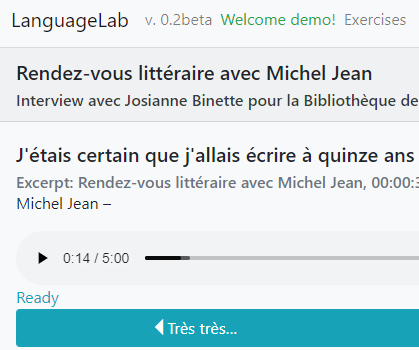

I program computers for a living, and one thing I do is make chatbots. The most successful one doesn’t pretend to be a customer service agent, or a personal assistant, or an omniscient oracle. It just takes commands, like “summary for 123456” where 123456 is the number of a ticket in our customer service tracking system.

This is because the program has a function: it shows you summaries for tickets. It doesn’t think, it doesn’t have feelings, it doesn’t care if you’re nice or clever or concise. It just waits for input and provides an output.
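
To make that concrete, here is a minimal sketch of a command-style bot of this kind. It is not the actual program: the `TICKETS` dictionary, the `handle_command` function, and the sample summary text are all made up for illustration, standing in for a real ticket tracking system.

```python
import re

# Hypothetical stand-in for a customer service tracking system.
TICKETS = {
    "123456": "Customer cannot log in after password reset.",
}

# The only command this sketch understands: "summary for <ticket number>".
COMMAND = re.compile(r"^summary for (\d+)$")


def handle_command(text: str) -> str:
    """Turn one line of input into one line of output. No persona, no small talk."""
    match = COMMAND.match(text.strip().lower())
    if match is None:
        return "Usage: summary for <ticket number>"
    ticket_id = match.group(1)
    summary = TICKETS.get(ticket_id)
    if summary is None:
        return f"No ticket {ticket_id}"
    return f"{ticket_id}: {summary}"


if __name__ == "__main__":
    print(handle_command("summary for 123456"))
    print(handle_command("Hello, how are you today?"))
```

Anything that isn’t a recognized command gets a usage message, whether it’s polite, clever, or rude. The program has nothing to perform and nobody to perform for.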

And I know, because I used to work on language models, that all chatbots are programs like the ones I write every day. They wait for input and provide an output. The difference with large language models is that they’re “fancy autocomplete” – as Emily Bender calls it. LLMs like ChatGPT are programmed to examine a chat and provide the most likely response.
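
The “most likely response” idea can be illustrated with a toy word-level autocomplete. This is a deliberately crude sketch, not how ChatGPT or any real LLM is built: real models use neural networks over tokens, are trained on vastly more text, and usually sample from a probability distribution rather than always taking the top choice. The `CORPUS`, `train`, and `complete` names below are invented for the example.

```python
from collections import Counter, defaultdict

# Tiny made-up "training corpus" of polite phrases.
CORPUS = [
    "thank you so much",
    "thank you for asking",
    "thank you so much for asking",
    "so sorry to hear that",
]


def train(corpus):
    """Count, for each word, which words follow it and how often."""
    counts = defaultdict(Counter)
    for line in corpus:
        words = line.split()
        for current, following in zip(words, words[1:]):
            counts[current][following] += 1
    return counts


def complete(counts, prompt, max_words=3):
    """Extend the prompt by repeatedly appending the most frequent next word."""
    words = prompt.split()
    if not words:
        return ""
    for _ in range(max_words):
        followers = counts.get(words[-1])
        if not followers:
            break  # this word was never followed by anything in the corpus
        words.append(followers.most_common(1)[0][0])
    return " ".join(words)


if __name__ == "__main__":
    model = train(CORPUS)
    print(complete(model, "thank"))  # thank you so much
    print(complete(model, "sorry"))  # sorry to hear that
```

The output sounds grateful or sympathetic only because those word sequences were the most frequent ones in the text it counted.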

So why do chatbots often write things that look like they care? Why do they sometimes seem to be impressed or have other feelings about what people write to them? Because those are the most common responses they find in their training corpora. Just like the historical re-enactors are paid (or volunteer) to perform, the chatbots are programmed to perform.

And similar to the way historical re-enactors in museums aren’t paid to care about other people’s performances, chatbots aren’t programmed to care about the performance of the people who type things at them. Anything that looks like caring is itself a performance.

So what is the point of pretending that the chatbot is a customer service agent, or a personal assistant? If anyone ever reads my chat transcripts, they’re not going to do it to appreciate how well I play the role of a customer, or a VIP. If I want to perform for myself, I’ve got better ways of doing it.

I’m aware that there’s a whole industry of “prompt engineering” that’s developed in the past few years. It’s more like prompt guesswork: trial and error of various combinations of input text with the goal of getting the language model to produce a particular output, and no guarantee that the prompts will work on any other models, or even other versions of the same model. If I thought that there was some great value to be gotten from the model’s output I might try it, but I haven’t heard about anything worth performing this awkward pantomime for.