A few days ago, Byron Ahn drew our attention to an excerpt from a new, six-hour audiobook, Inside Voice by Lake Bell, credited as an “actress/writer/director/producer.” Bell is a friend of author and podcaster Malcolm Gladwell, and Gladwell agreed to serve as a kind of sounding board for Bell’s ideas about something she calls “sexy baby voice,” pointing to the voices of Paris Hilton and Kim Kardashian as paradigm examples of it. Gladwell, whose company is publishing Inside Voice, also published this excerpt as a free bonus episode of his podcast Revisionist History, which I listen to regularly, although I’m almost two years behind.

Bell argues for a few points: that what she calls “sexy baby voice” is a distinct speech style with specific audible features, that it is particularly inauthentic (she claims several times that it requires effort to speak that way, and describes a coaching technique for helping women to find their “true” voices) and that it makes the women who use it sound stupider than Bell knows them to be. She repeatedly assures us that she is not passing judgment, and then uses extremely judgmental language to describe “sexy baby voice,” which I interpret as an application of “love the sinner, hate the sin.”

Ahn posted a series of Twitter threads about the excerpt. He notes that it’s problematic for Bell, a self-identified feminist, to criticize women, but he focuses on the terminology that she uses to describe the features of “sexy baby voice,” particularly the word “pitch.” He concludes, “we should encourage public figures talking about voices to consult linguists who have the training.”

I’ve got a lot of thoughts and feelings about this excerpt and Bell’s idea of “sexy baby voice.” I could probably write several blog posts on the practical, cultural and social angles to this. For this post I’m going to keep with Ahn’s focus on what “sexy baby voice” is, phonetically. I sketched some of this out on Ahn’s Twitter thread, and I’ll synthesize and expand that here.

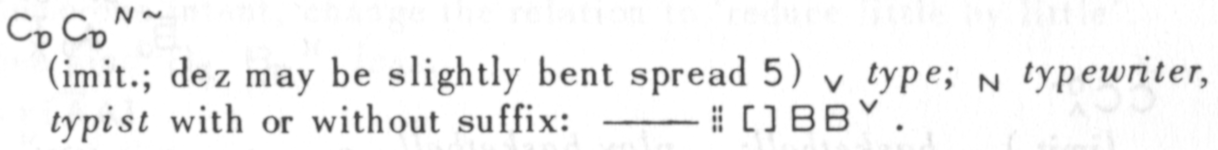

Bell says that the primary feature that defines “sexy baby voice” is “pitch,” and as linguists, we’re trained to interpret “pitch” as the fundamental frequency of the voice – essentially, the lowest frequency produced by the voice at any given time. I’ve been taking singing lessons, and all the singers and singing teachers I’ve talked to use “pitch” in the same way.

Ahn introduces his discussion of the “sexy baby voice” excerpt with a graph of the fundamental frequency of a segment of the recording – throughout the excerpt, Bell uses her own voice to demonstrate the “sexy baby voice” style, even though she says she does not use it in everyday conversation. In the graph he posts, the floor and ceiling of Bell’s fundamental frequency range are not particularly higher when she is using “sexy baby voice” than at other times.

Bell mentions two other factors: “vocal fry” (the linguistic term is “creaky voice”) and “slurring” speech. Ahn speculates that she may be picking up on other factors as well, like “SoCal vowels” or laryngeal constriction. He also acknowledges that “pitch” may refer to other pitch-related features besides fundamental frequency range, such as “uptalk,” a pattern of rising in fundamental frequency at the ends of phrases. Gladwell uses the word “uptalk” when echoing Bell’s explanations, but it’s not clear that he’s referring to phrase-final pitch rise.

So here’s where I come in: my gender expression is fluid, so I’ve been studying differences in vocal quality. When I listen to the samples Bell gives in the chapter of “sexy baby voice” and … not-sexy-baby-voice (that’s for another post!), both in recordings and in her own mimicry, I hear some creaky voice (“vocal fry”), but the main difference I hear is resonance.

This section is going to be a bit of a departure from my normal linguistics blogging, because I have not studied any of the literature on this. My understanding of it comes from practical training, so I don’t know who to cite or credit for any of this besides my teachers, Kristy Bissell and Erin Carney. Of course, any inaccuracies are most likely due to my misunderstanding of what they’ve tried to teach me!

Resonance is about the pitch of speech, but it’s not about the fundamental frequency. It’s about everything else: the harmonics that result from the way the tones from our vocal folds echo around our bodies and are filtered through different parts of our vocal tracts and nasal passages. Just as plucking a string on an acoustic guitar produces overtones from the guitar body, whenever we arrange our vocal folds to talk or sing we produce overtones: higher pitched frequencies that can harmonize or clash with the fundamental frequency.

There are a ton of things you can do with resonance and it can get really complicated, so let’s focus on the primary resonance difference I’m hearing between Lake Bell’s “sexy baby voice” and the other examples. To me, the “sexy baby voice” examples sound brighter.

Bright and dark are useful terms to evoke the quality of resonance while distinguishing it from fundamental frequency. Bright sounds are ones where we hear more of the higher-pitched harmonics, while in dark sounds the lower harmonics dominate.
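One rough way to put a number on “bright” versus “dark” is the spectral centroid: the amplitude-weighted average frequency of the harmonics. The Python sketch below is only an illustration, not a measurement of anyone’s actual voice. The 200 Hz fundamental, the ten harmonics and the two rolloff values are all made-up assumptions. It builds two idealized harmonic series on the same fundamental and shows that the one with stronger upper harmonics has the higher centroid.

```python
def harmonic_amplitudes(n_harmonics, rolloff):
    """Amplitude of harmonic k is 1 / k**rolloff (k = 1 is the fundamental).

    A small rolloff keeps the upper harmonics strong; a large one fades them fast.
    This is an idealized, hypothetical spectrum, not a model of a real voice.
    """
    return [1.0 / k ** rolloff for k in range(1, n_harmonics + 1)]


def spectral_centroid(f0, amplitudes):
    """Amplitude-weighted mean frequency (Hz) of a harmonic series built on f0."""
    weighted = sum(f0 * k * a for k, a in enumerate(amplitudes, start=1))
    return weighted / sum(amplitudes)


F0 = 200.0  # assumed fundamental frequency in Hz, identical for both "voices"

bright = harmonic_amplitudes(10, rolloff=0.5)  # upper harmonics stay strong
dark = harmonic_amplitudes(10, rolloff=2.0)    # upper harmonics fade quickly

bright_centroid = spectral_centroid(F0, bright)
dark_centroid = spectral_centroid(F0, dark)

print(f"same fundamental: {F0:.0f} Hz")
print(f"bright centroid:  {bright_centroid:.0f} Hz")
print(f"dark centroid:    {dark_centroid:.0f} Hz")
```

The fundamental never changes here, which is the point: two voices can sit on the same pitch and still differ in how bright they sound.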

As I’ve learned from my teachers, and as Bell demonstrates, there’s a lot we can do with our voices to shift the balance of harmonics towards bright or dark, but a substantial part of resonance comes from the structure of our bones, cartilage, muscles and fat. Higher-pitched harmonics tend to come from shorter vocal tracts, smaller nasal cavities, and in general, from smaller bodies. As a result, the voices of smaller people tend to sound brighter.
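A common textbook simplification makes the link between size and brightness concrete: treat the vocal tract as a uniform tube, closed at the vocal folds and open at the lips, whose resonant frequencies are odd multiples of the speed of sound divided by four times the tube length. Those resonances determine which harmonics get emphasized. The sketch below assumes that idealized model, a round figure of 35,000 cm/s for the speed of sound, and two illustrative tract lengths that I have picked for the arithmetic rather than measured from anyone.

```python
SPEED_OF_SOUND_CM_PER_S = 35000.0  # assumed round value for warm air


def tube_resonances(length_cm, count=3):
    """First `count` resonances (Hz) of a uniform tube closed at one end.

    Quarter-wavelength model: F_n = (2n - 1) * c / (4 * L).
    A real vocal tract is not a uniform tube, so treat these as rough guides.
    """
    return [
        (2 * n - 1) * SPEED_OF_SOUND_CM_PER_S / (4 * length_cm)
        for n in range(1, count + 1)
    ]


longer_tract = tube_resonances(17.5)   # illustrative longer tract, in cm
shorter_tract = tube_resonances(14.0)  # illustrative shorter tract, in cm

print("17.5 cm tube:", longer_tract)   # [500.0, 1500.0, 2500.0]
print("14.0 cm tube:", shorter_tract)  # [625.0, 1875.0, 3125.0]
```

Every resonance of the shorter tube is higher than the matching resonance of the longer one, which is one way to picture why smaller bodies tend to produce brighter voices.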

Testosterone during the teenage years also changes the configuration of our vocal tracts: thickening the vocal folds, making the larynx larger and shifting it lower in the throat. This is why men’s and trans women’s voices tend to sound darker than those of women, girls and prepubescent boys, even when singing the same pitch.

Bodies that see an increase in testosterone after puberty do not get larger or lower larynxes, but do tend to develop thicker vocal folds. This is why many trans men’s voices change, but often sound different from typical men’s voices. It is also, as Bell mentions, why women’s voices often change when they give birth or go through menopause.

As you might have guessed, this is where the “baby” in “sexy baby voice” comes from. Children are smaller than adults and tend to have brighter resonances. It’s also why Bell sees “sexy baby voice” as an exaggerated expression of femininity: women tend to be smaller than men and therefore have brighter voices. Women who haven’t given birth or gone through menopause tend to have brighter voices. Bright resonance suggests youth, femininity and immaturity.

As I mentioned above, there are several things that people can do, consciously or unconsciously, to shift their resonances, and I want to talk about them. I would also love to get into a discussion of the sociopolitical issues that Bell identifies around “sexy baby voice” and women’s voices in general. But this is already pretty long for a blog post, so I’ll save those for another time.